Open the /conf/slaves file in a text editor and add “localhost” on a new line.

Add the following to your /conf/spark-env.sh file:

export SPARK_WORKER_DIR=/PathToSparkDataDir/

Note: Both the slaves and spark-env files are already present in the conf directory; you will have to rename them.
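The conf changes described here could be scripted roughly as follows. This is only a sketch: the function name `configure_spark_conf` is made up for illustration, and it assumes the conf directory ships `slaves.template` and `spark-env.sh.template` (as Spark 2.x downloads do). It copies the templates rather than renaming them, so the originals stay available.

```shell
#!/usr/bin/env bash
# Sketch: activate conf/slaves and conf/spark-env.sh for a local standalone cluster.
# Assumption: the conf directory contains slaves.template and spark-env.sh.template,
# as in Spark 2.x downloads; adjust the names if your release differs.
configure_spark_conf() {
  local conf_dir="$1"     # for example "$SPARK_HOME/conf"
  local worker_dir="$2"   # for example /PathToSparkDataDir/

  # Copy each template to its active name unless the active file already exists.
  [ -f "$conf_dir/slaves" ] || cp "$conf_dir/slaves.template" "$conf_dir/slaves"
  [ -f "$conf_dir/spark-env.sh" ] || cp "$conf_dir/spark-env.sh.template" "$conf_dir/spark-env.sh"

  # List localhost as a worker host, on its own line, if it is not there yet.
  grep -qx 'localhost' "$conf_dir/slaves" || printf '\nlocalhost\n' >> "$conf_dir/slaves"

  # Set the worker directory once.
  grep -q '^export SPARK_WORKER_DIR=' "$conf_dir/spark-env.sh" ||
    printf '\nexport SPARK_WORKER_DIR=%s\n' "$worker_dir" >> "$conf_dir/spark-env.sh"
}
```

A call such as `configure_spark_conf "$SPARK_HOME/conf" /PathToSparkDataDir/` can be repeated safely, since each step checks before writing.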

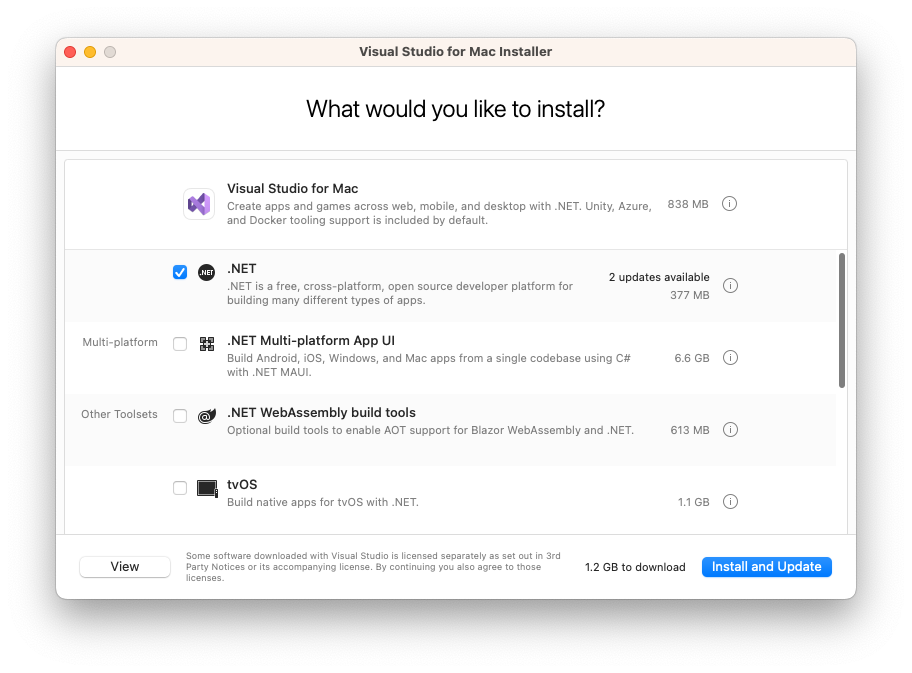

We will need a Spark cluster, as we will be submitting our Java Spark jobs to it. We will set up a cluster that has 2 slave nodes. Again, there are plenty of good blogs covering this topic; please refer to one of them.

If you have brew configured, all you need to do is run:

brew install apache-spark

Your Spark binaries/package get installed in the /usr/local/Cellar/apache-spark folder. Set up SPARK_HOME now:

vi ~/.bashrc
export SPARK_HOME=/usr/local/Cellar/apache-spark/$version/libexec
export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

Once you have installed the binaries, either using the manual download method or via brew, proceed to the next steps, which will help us set up a local Spark cluster with 2 workers and 1 master.
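As a rough sketch, the SPARK_HOME setup could be appended to the rc file without opening an editor. The helper name `add_spark_env` is hypothetical, and the /usr/local/Cellar prefix is taken from the text; `brew --prefix apache-spark` prints the location Homebrew actually used on your machine.

```shell
#!/usr/bin/env bash
# Sketch: append the SPARK_HOME and PATH exports to a shell rc file, once.
add_spark_env() {
  local version="$1"                    # version directory under Cellar
  local rc_file="${2:-$HOME/.bashrc}"   # rc file to edit (defaults to ~/.bashrc)
  local spark_home="/usr/local/Cellar/apache-spark/$version/libexec"

  # Skip if SPARK_HOME is already exported in the rc file.
  grep -q '^export SPARK_HOME=' "$rc_file" 2>/dev/null && return 0

  {
    printf 'export SPARK_HOME=%s\n' "$spark_home"
    # Single quotes keep $PATH and $SPARK_HOME literal, to be expanded at login.
    printf 'export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin\n'
  } >> "$rc_file"
}
```

After `brew install apache-spark`, calling `add_spark_env` with your installed version and then running `source ~/.bashrc` makes the Spark scripts available in the current shell.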

Either double-click the package or run the tar -xvzf /path/to/yourfile.tgz command, which will extract the Spark package. On Windows, you will need to extract the tgz Spark package using 7zip, which can be downloaded freely.

Run spark-shell (spark-shell.cmd on Windows) and you should be in the Scala command prompt, as shown in the picture. If everything goes fine, you have installed Spark successfully. A Scala install is not needed for the Spark shell to run, as the binaries are included in the prebuilt Spark package.

There are plenty of Java install blogs; please refer to one of them for installing and configuring Java on either Mac or Windows. As we will be focusing on the Java API of Spark, I’d recommend installing the latest Eclipse IDE and Maven packages too. You are good if you have Maven installed in your Eclipse alone. If you wish to run your pom.xml from the command line, then you need Maven on your OS as well.
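For the manual route, the extraction step might look like the sketch below. The function name `extract_spark` is illustrative, and it assumes the package unpacks into a single top-level directory that contains bin/spark-shell, which is how the prebuilt packages are laid out.

```shell
#!/usr/bin/env bash
# Sketch: unpack a Spark tgz and confirm the launcher script is present.
extract_spark() {
  local tgz="$1"    # path to the downloaded package, e.g. /path/to/yourfile.tgz
  local dest="$2"   # directory to unpack into
  mkdir -p "$dest"
  tar -xzf "$tgz" -C "$dest"
  # Print the path of the extracted launcher; fails if it is missing.
  ls "$dest"/*/bin/spark-shell
}
```

The printed path is the script to run to reach the Scala prompt.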

From Apache Spark’s website, download the tgz file. If you want to choose version 2.4.0, you need to be careful! Some software (like Apache Zeppelin) doesn’t support this version yet (end of 2018). Run the download on each node, and make sure to repeat this step for every node.

On each node, extract the software and remove the .tar.gz file.

Once all slave nodes are running, reload your master browser page. All worker nodes will be attached to Master 1.
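The original commands for the per-node download and extraction are not preserved here, so the following is only a sketch of what they might look like. Both function names are hypothetical, and the URL layout and the Hadoop 2.7 build are assumptions based on how releases are arranged on archive.apache.org.

```shell
#!/usr/bin/env bash
# Sketch: build the download URL for a prebuilt Spark package.
# Assumption: release archives follow the layout used on archive.apache.org.
spark_tgz_url() {
  local version="$1"          # e.g. 2.3.2
  local hadoop="${2:-2.7}"    # Hadoop build of the package (assumed default)
  printf 'https://archive.apache.org/dist/spark/spark-%s/spark-%s-bin-hadoop%s.tgz\n' \
    "$version" "$version" "$hadoop"
}

# Sketch: download, extract, and clean up; run this on every node.
install_spark_on_node() {
  local url tgz
  url="$(spark_tgz_url "$1")"
  tgz="${url##*/}"     # file name is the last path segment of the URL
  curl -fLO "$url"     # download into the current directory
  tar -xzf "$tgz"      # extract the software
  rm "$tgz"            # remove the archive once extracted
}
```

Running `install_spark_on_node 2.3.2` in each SSH session would leave an extracted spark-2.3.2-bin-hadoop2.7 directory in the working directory.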

Standalone mode is good to go for developing applications in Spark. Java should be pre-installed on the machines on which we have to run Spark jobs.

Connect via SSH to every node except the node named Zookeeper, and make sure an SSH connection is established. If you don’t remember how to do that, you can check the last section of [...]

For the sake of stability, I chose to install version 2.3.2.

Spark can be configured with multiple cluster managers like YARN, Mesos, etc. Along with that, it can be configured in standalone mode.

For this tutorial, I chose to deploy Spark in Standalone Mode. This is the simplest way to deploy Spark on a private cluster. Both driver and worker nodes run on the same machine.
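To make standalone mode concrete, here is a minimal sketch of starting a master and a worker on one machine. It assumes SPARK_HOME is set and that the release ships sbin/start-master.sh and sbin/start-slave.sh (Spark 2.x naming; later releases rename the worker script). The helper names are made up for illustration, and 7077 is Spark's default master port.

```shell
#!/usr/bin/env bash
# Sketch: build the standalone master URL (spark://host:port).
master_url() {
  printf 'spark://%s:%s\n' "${1:-localhost}" "${2:-7077}"
}

# Sketch: start a master and attach one worker on the same machine.
# Assumption: Spark 2.x script names under $SPARK_HOME/sbin.
start_local_cluster() {
  local host="${1:-localhost}"
  # Bind the master to the given host so the worker URL below matches it.
  "$SPARK_HOME/sbin/start-master.sh" --host "$host"
  "$SPARK_HOME/sbin/start-slave.sh" "$(master_url "$host")"
}
```

The master's web page shows the exact spark:// URL it is listening on; if the worker does not appear there, that URL is the one to pass to the worker script.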

The goal of this final tutorial is to configure Apache-Spark on your instances and make them communicate with your Apache-Cassandra Cluster with full resilience.